A lot has happened since the trailer debuted at E3 in June, and the feedback we’ve received since then has been heartwarmingly positive. Two awards stand out: the Game Critics Awards winner for ‘Best Independent Game’ at E3 and, more recently, Gold Winner at the Clio Entertainment Awards in the Games category.

In the meantime, we’ve been hard at work on the last two major remaining areas, Animation and Voice Acting, which I’ll be talking about today.

Animation

A few weeks ago we had a motion capture session to record what was probably our most technically intensive set of animations: the cop armlocking an actor, handcuffing him and throwing him on the ground. It was our second session with two simultaneous actors, and while the trailer session focused on long movement sequences, emotions, and line delivery, this one was all about precision and coordination, with the goal of recording enough variations to allow for natural navigation in such a small space. From an 8-hour session, we ended up with 144 sets of animation (two actors for each) for a total of 7 minutes of movement!

The actions we recorded were similar to what we did for the single-actor movement session that I covered previously, but this time with two characters in unison, making sure foot positions and start/end poses match. An example is the ‘Idle to Walk’ set, covering all possible directions an actor can go when he starts to walk:

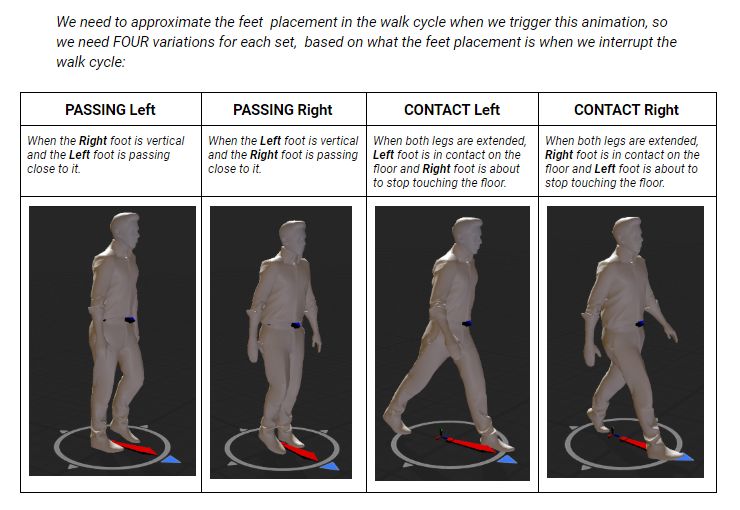

Another set is ‘Walk to Stop’, for when a character decides to stop walking. We had to record every single direction the actor might want to turn when stopping, and since a walk cycle can be interrupted at any moment, we also had to take foot position into account. We decided to record four variations per animation, depending on the foot placement.
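To make the idea concrete, here is a minimal sketch of how a clip could be picked from such a set. Everything in it is an assumption for illustration: the clip naming, the snap to 45-degree directions, and the way the walk cycle is split into four foot phases are hypothetical and not taken from the game.

```python
# Hypothetical sketch: the clip names, the 45-degree snap and the four
# foot-phase buckets are illustrative assumptions, not the game's data.

FOOT_PHASES = ["left_down", "left_passing", "right_down", "right_passing"]


def snap_angle(turn_degrees: float, step: int = 45) -> int:
    """Snap a desired turn angle to the nearest recorded direction."""
    return int(round(turn_degrees / step) * step) % 360


def foot_phase(cycle_time: float) -> str:
    """Map a normalized walk-cycle time to one of four foot phases."""
    index = int((cycle_time % 1.0) * len(FOOT_PHASES))
    # Guard against floating-point edge cases landing exactly on 1.0.
    return FOOT_PHASES[min(index, len(FOOT_PHASES) - 1)]


def pick_walk_to_stop(turn_degrees: float, cycle_time: float) -> str:
    """Build the name of the clip matching the turn and the foot placement."""
    angle = snap_angle(turn_degrees)
    return f"walk_to_stop_{angle:03d}_{foot_phase(cycle_time)}"


print(pick_walk_to_stop(100, 0.6))
```

The point of the sketch is only that two inputs, the turn direction and where the feet are in the walk cycle, together select one recorded variation.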

Here is a glimpse at the documentation that might explain it better:

And some of the animations from that set:

Also, since the apartment space is so small, the actors often don’t walk; it’s more natural to just side-step and turn to a position that is right next to them. To cover these cases, we recorded a ‘Side-step’ set with a variation for every angle at 45 degrees and then 8 directions for each of these angles, for a total of 124 unique variations:

We’ve also been slowly integrating more of the generic animations, like sitting down, standing up, or lying in bed.

And as we review all this new content, old sets of animation that used to feel natural now seem out of place, since everything else is more realistic. For example, if the wife wants to fill her glass with water from the sink and drink it, it used to be split into two separate animations:

- Grab a mug from inventory, fill it with water in the sink and place it back in the inventory.

- Take mug from inventory, drink water and place it back in the inventory.

But in order to make it feel more natural, we now blend between them, so the motion happens in one single movement.

And this is how it looks in-game. The version below also has a third blend where she drops the mug on the counter after drinking.

Another example is if you are sitting on the couch and want to grab/drop an item from the coffee table right next to you. Previously, the actor would get up, walk to the table and grab the item, but in real life, we would just turn and grab the object:

And as we get these new actions, we’re adding smaller details, like seeing the pages turn in the book the wife is reading:

Another result of these more realistic and detailed animations is that they take slightly longer to play and sometimes you might want to interrupt them.

For example, imagine you make the character sit at the table but then change your mind. We can’t interpolate between the ‘sit down’ and ‘stand up’ animations in a natural way since they are so different, and it would be frustrating for the player to wait for the sit-down motion to complete before triggering the stand-up. Here is an example of an uninterrupted sit-down and stand-up:

The solution we found for an immediate interruption that feels natural is to reverse the sitting animation the moment you choose to stop it. It still feels realistic and fluid, and the secondary motion from the tie helps sell the movement:
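As a rough illustration of the reversal idea, here is a minimal sketch of a clip player that can be interrupted mid-playback. The `ClipPlayer` class and its fields are hypothetical names made up for this example, and it assumes a clip is simply sampled by a time value; it is not the game’s actual animation code.

```python
from dataclasses import dataclass


@dataclass
class ClipPlayer:
    """Hypothetical player for one clip, e.g. the sit-down animation."""
    duration: float      # clip length in seconds
    time: float = 0.0    # current playback position
    speed: float = 1.0   # +1.0 plays forward, -1.0 plays in reverse

    def interrupt(self) -> None:
        """Reverse playback from wherever the clip currently is."""
        self.speed = -1.0

    def update(self, dt: float) -> bool:
        """Advance by dt seconds; return True once the clip has finished."""
        new_time = self.time + self.speed * dt
        self.time = min(max(new_time, 0.0), self.duration)
        if self.speed > 0:
            return self.time >= self.duration
        return self.time <= 0.0


player = ClipPlayer(duration=2.0)
player.update(0.5)          # the character starts to sit down
player.interrupt()          # the player changes their mind
print(player.update(0.25))  # still rewinding
print(player.update(0.5))   # back at the standing pose
```

Because playback rewinds from the current position instead of starting a different clip, the character never has to finish sitting before getting up again.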

Finally, we want you to feel the main character’s emotional progression through the loops (since everything resets but your knowledge), so situations that happen often should have slight variations to show this. For example, the first time you are handcuffed (still lost and trying to fight back) versus the 10th time (where you just don’t care).

Most of these decisions can’t really be planned in advance and will happen during a motion capture session or implementation. This is why the project ends up taking just a ‘tiny’ bit longer. From my previous experience, and this project is proving it again, the last 90% of development holds a lot of critical decisions that can make or break the end result. There are significant changes that can only be made when we see all the pieces together. These are decisions that couldn’t have been planned beforehand, and it would be sad if we had to give them up (as you usually do in a lot of AAA projects). Fortunately, the nature of the collaboration with Annapurna Interactive allows for that to happen, something that I’m grateful for.

Voice Acting

The other production challenge is voice acting. A few weeks ago, we scheduled our first ‘major’ 4-hour recording session so we could go over the whole workflow, from recording to processing the audio files and implementing them in the game.

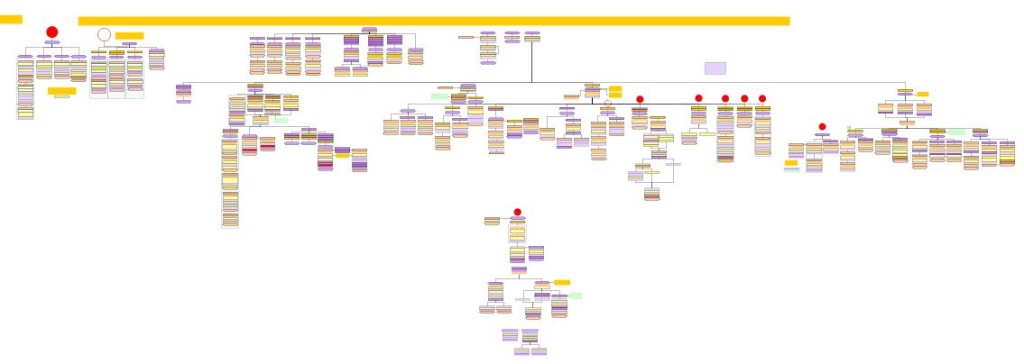

One of the challenges we ran into was finding a way to provide a readable script for the actors. All the conversations in the game have a lot of branching and variations and are organized like flowcharts, making them very hard to convert to a linear script format. To give you an idea, here is a slice of one of the many sets of dialogues we have in the game:

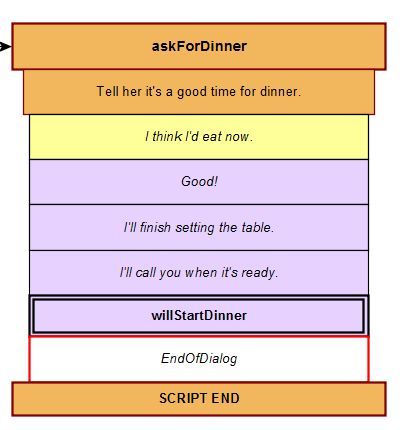

And a zoom-in on one of those blocks (Yellow Node – Husband, Pink Node – Wife):

For a long time, we tried to convert these charts into linear movie-style scripts, but it quickly became a disorganized mess. It’s hard to track who is the parent of a sequence, the emotional state of that set of sequences, and if you want to jump to another interaction that could follow the current one, you can’t quickly find it. But in the flowchart format, everything is neatly organized/parented by the topic and the iterations of that topic as well as being color-coded and searchable.

So for this session, we wanted to see if we could read directly from the charts, so I brought my laptop and shared the screen with two iPads, one for each actor. That way I could ‘move’ between the script nodes for what we were recording, and the actors used the iPads as if they were a script, with me ‘turning’ the pages. It also allowed us to update lines if they came up with a better variation on the spot and to mark everything we recorded in the same flowchart files that the engine uses to generate the dialogues.

We also used this opportunity to get an overview of how much work and time the whole process will take if we scale it to the full size of the project. We managed to record about 500 lines in a 4-hour session with three actors. Crucial time is used up when you need to change the topic and go over the current scene (e.g. ‘This is the wife reacting to the fact that the player has been turning the lights on and off for a minute’) or explain the current emotional state in that sequence (e.g. the player knows about the cop, but not the time loop, and he has tried to go over this topic a few times and is out of patience).

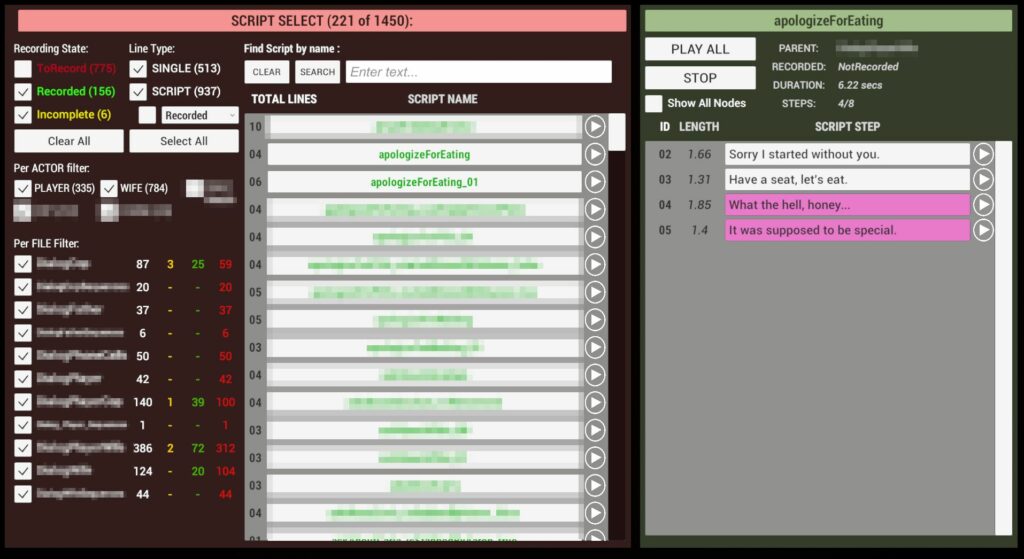

On the integration side, each node that you saw above is a single audio file, and the naming convention allows the engine to know which file to load. We quickly realized we didn’t have a way of reviewing the checked-in files unless we played the game, or opened the audio files one at a time and cross-checked them with the flowchart.

Also, if we named a file incorrectly or assigned it to another node, there was no way of hunting it down. Even playing the game was too time-consuming, as you often have to restart to hear lines that would never play in a specific sequence of events.
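The kind of cross-check described above can be sketched in a few lines. This is only an illustration under loud assumptions: the post does not describe the real naming convention, so the sketch pretends each node maps to a file called `<node id>.wav`, and the node ids and paths below are invented.

```python
from pathlib import PurePath


def audit_dialogue_audio(node_ids, filenames, extension=".wav"):
    """Compare flowchart nodes against checked-in audio files.

    Assumes a hypothetical convention of one file per node, named
    '<node_id><extension>'. Returns (missing, orphaned) file names.
    """
    expected = {f"{node_id}{extension}" for node_id in node_ids}
    found = {PurePath(name).name for name in filenames}
    missing = sorted(expected - found)    # nodes with no recording yet
    orphaned = sorted(found - expected)   # files matching no node
    return missing, orphaned


# Invented example data: one file is misnamed ('3' instead of '03').
nodes = ["wife_lights_01", "husband_cop_03"]
files = ["audio/wife_lights_01.wav", "audio/husband_cop_3.wav"]
print(audit_dialogue_audio(nodes, files))
```

A misnamed file shows up twice in a report like this, once as a node with no audio and once as a file with no node, which makes it easy to spot.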

So last week I focused on creating a Dialogue editor to fix all these issues, working with the sound designer to make sure it fits our needs. It was a new and fun process for me, and having all the data from the session made it easier to improve the tool. Now I don’t think we can live without it! You can see it below (blurred to prevent spoilers).

The other question I’ve been asked a lot is “When is it coming out?” We are aiming for a 2020 release, and once we know for sure that we will hit our planned release date, we will announce it here.

Love to see the improvements! Voice acting will definitely make it a much better experience. Good luck with the final stretch of development! 🙂

Can’t wait for it to release

What do you think of your choice of perspective as of now? It was a foundation of the concept at the start, wasn’t it? But you probably didn’t envision such a highly realistic execution of your drawing board. Is a view from above still the way to go, and why? To be constantly conscious of how confined the field is? This is, by the way, what I LOVE about your project: the small measurements of space and time.

At the start, the main reason for the top-down view was to simplify navigation (just a 2D plane) and there was never a good enough reason to change it throughout the development. Very early on, the camera was even isometric and I was toying with keeping it all 2D.

As the project evolved, I realized how it helps the visuals stand out and how it reinforces the apparent simplicity and claustrophobia of the situation the protagonist is in. In the end it became a driving principle for everything, to keep all elements as simple as they need to be.

Wow, nice to see so much work being put into this, really looking forward to the overall product, and maybe get a laugh (or a gasp) at the final details that you decided to pour love into.

Seeing this makes me more hyped for the game. All of you are doing a fantastic job and I cannot wait to see the release of the game.

From Sweden. I look forward to playing this game!!!!!!

I like the concept, love the improvements in movement and am really curious to play this game! Keep it going!

Superb, I love time loop games!

This makes me the most hyped for an indie game since Inside. Can’t wait…

I love how ambitious this project is despite taking place in a very limited space. Looking forward to getting to play it over and over again. Good luck with the future development.